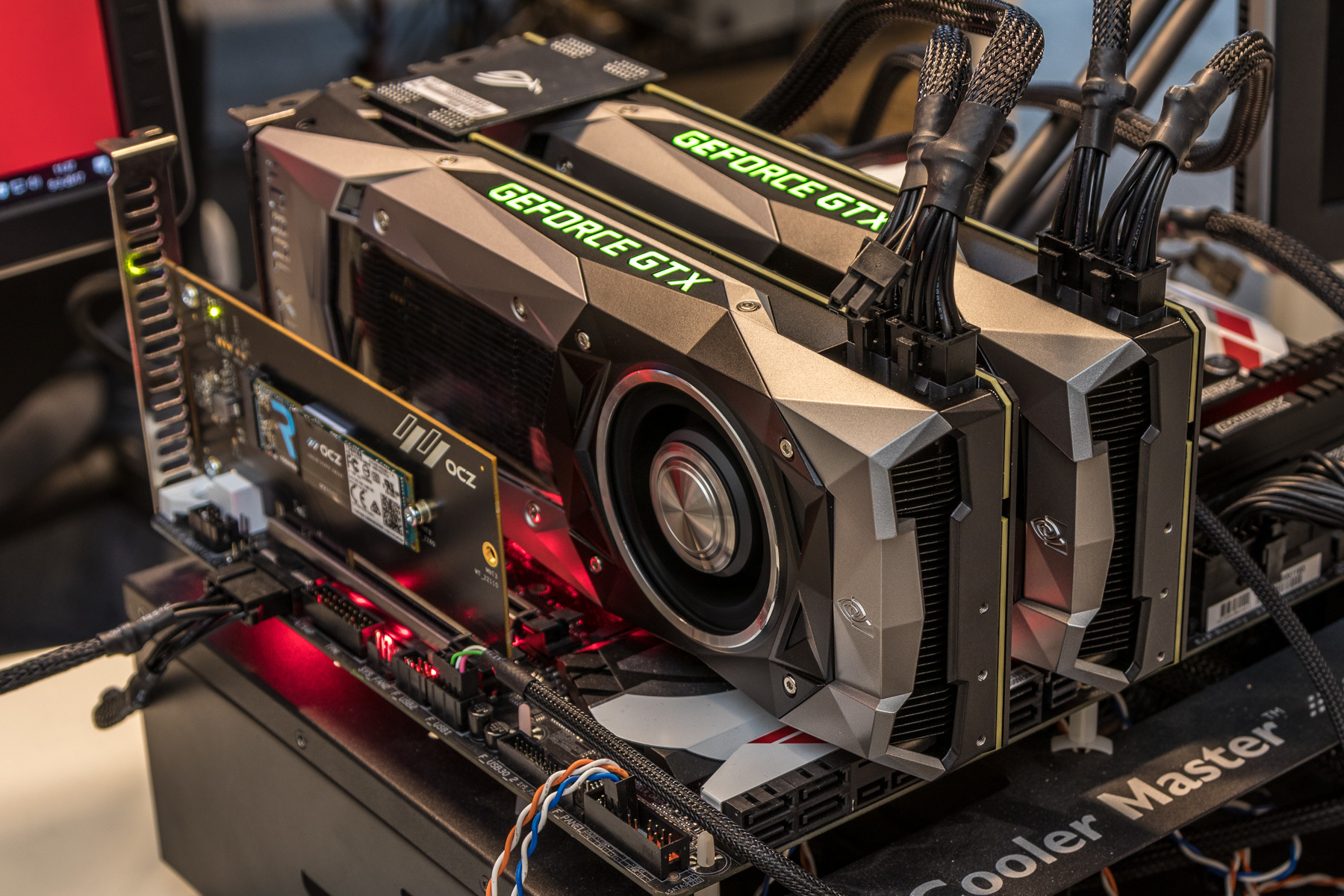

Looking at an architecture diagram for GP104, Pascal ends up looking a lot like Maxwell, and this is not by chance. After making more radical changes to their architecture with Maxwell, for Pascal NVIDIA is taking a bit of a breather. Which is not to say that Pascal is Maxwell on 16nm (this is very much a major feature update), but when it comes to discussing the core SM architecture itself, there is significant common ground with Maxwell.

Simply named the SM for this generation (NVIDIA has ditched the generational suffix due to the potential for confusion with the used-elsewhere SMP), the GP104 SM is very similar to the Maxwell SM. We’re still looking at a single SM partially sub-divided into four pieces, each containing a single warp scheduler that’s responsible for feeding 32 CUDA cores, 8 load/store units, and 8 Special Function Units, backed by a 64KB register file. There are two dispatch ports per warp scheduler, so when an instruction stream allows it, a warp scheduler can extract a limited amount of ILP from that stream by issuing a second instruction to an unused resource.

Meanwhile, shared between every pair of sub-partitions are 4 texture units and the combined L1/texture cache, again unchanged from Maxwell. Finally, we have the resources shared throughout the whole SM: the 96KB shared memory, the instruction cache, and, not pictured on NVIDIA’s diagrams, the 4 FP64 CUDA cores and 1 FP16x2 CUDA core.

Overall then, at the diagram level the GP104 SM looks almost identical to the Maxwell SM, with one exception: the PolyMorph Engine.
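To make the layout concrete, the per-partition and SM-wide figures quoted in this section can be tallied into whole-SM totals. The following is only a rough sketch: the constant and function names are made up for illustration, and the numbers are simply those given in the text, not values read from any NVIDIA tool or API.

```python
# Figures quoted in the text for one GP104 SM partition (illustrative names).
PARTITIONS_PER_SM = 4
PER_PARTITION = {
    "warp_schedulers": 1,
    "dispatch_ports": 2,          # two dispatch ports per warp scheduler
    "cuda_cores": 32,
    "load_store_units": 8,
    "special_function_units": 8,
    "register_file_kb": 64,
}

# Texture units are shared between each pair of partitions.
TEXTURE_UNITS_PER_PARTITION_PAIR = 4

# Resources shared across the whole SM, as listed in the text.
SM_WIDE = {"shared_memory_kb": 96, "fp64_cores": 4, "fp16x2_cores": 1}


def sm_totals():
    """Return whole-SM resource counts derived from the quoted figures."""
    # Per-partition resources scale with the number of partitions.
    totals = {name: count * PARTITIONS_PER_SM
              for name, count in PER_PARTITION.items()}
    # Four partitions form two pairs, each pair sharing one texture block.
    totals["texture_units"] = (
        TEXTURE_UNITS_PER_PARTITION_PAIR * (PARTITIONS_PER_SM // 2)
    )
    # SM-wide resources are counted once.
    totals.update(SM_WIDE)
    return totals


if __name__ == "__main__":
    for name, value in sm_totals().items():
        print(f"{name}: {value}")
```

Under these figures, a single SM works out to 128 CUDA cores, 8 texture units, and 256KB of register file in total.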
